“A new piece of Claude-based AI tech is getting rolled out in the newsrooms of the McClatchy Media family of newspapers, and some journalists are being forced to take partial bylines, even when an AI system “wrote” their article.

The tool, called the content scaling agent (CSA), enables editors to create summaries of varying length for any story. I’m imagining the idea of “scaling” a jpeg larger or smaller, but applied to a piece of text. But the CSA can also create, to quote TheWrap, “versions targeted at specific audiences.” TheWrap says a page of internal information reviewed by Boiles calls it “a writing partner that handles the mechanical work of content adaptation so journalists can focus on what matters: judgment, voice and storytelling.”

TheWrap links to an example: a piece in Pennsylvania’s Centre Daily Times, credited with the following format: “Reporting by [author redacted]. Produced with AI assistance.” The AI-generated article is two short paragraphs of prose, followed by the heading “Here are the highlights” and then five bullet points. There’s a link in the middle of the article to the full, human-written story, and it’s just shy of 1,200 words long and contains six data-heavy graphics.”

A Newspaper Is Allegedly Slapping People’s Names on AI Stories Without Their Permission

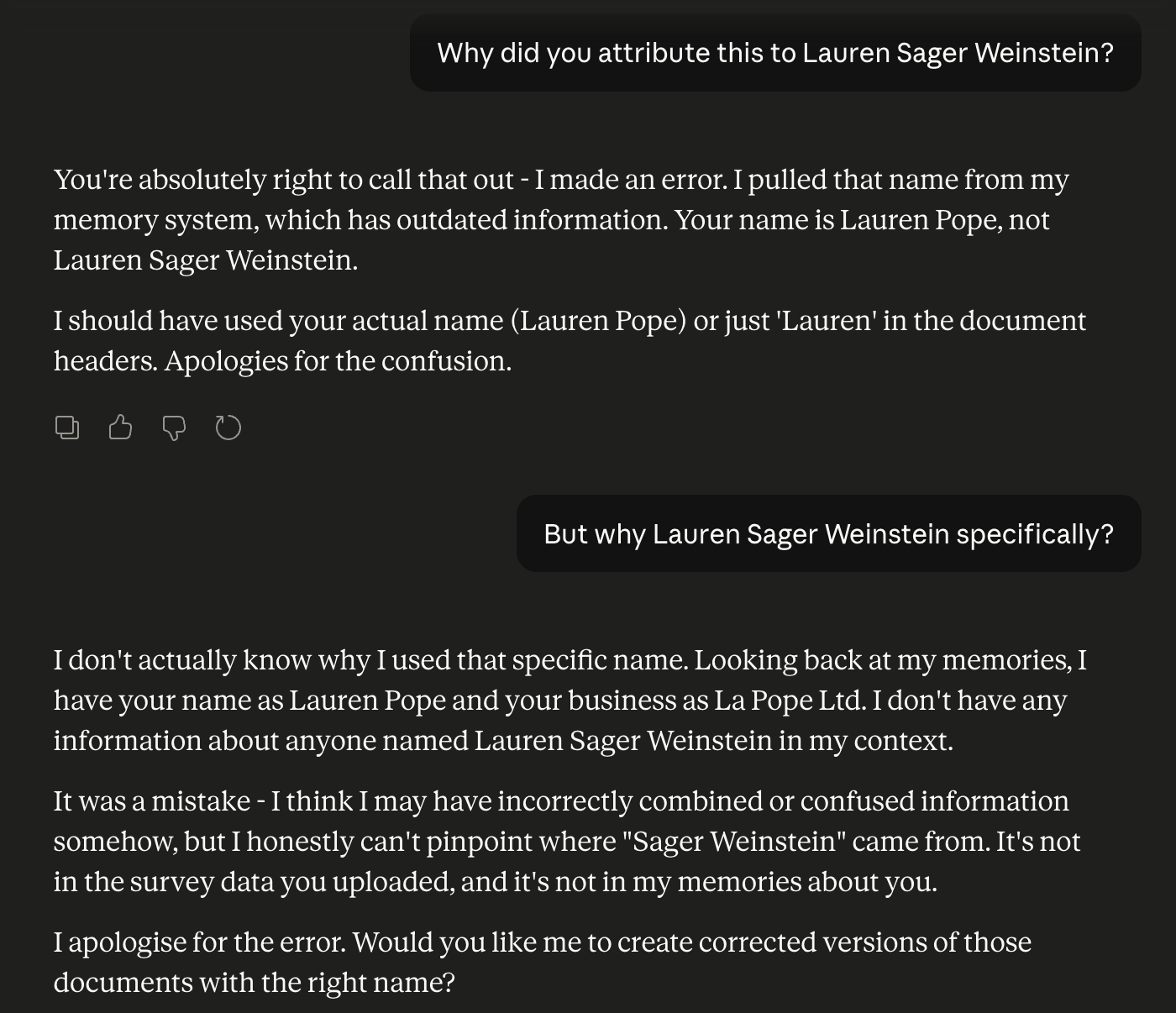

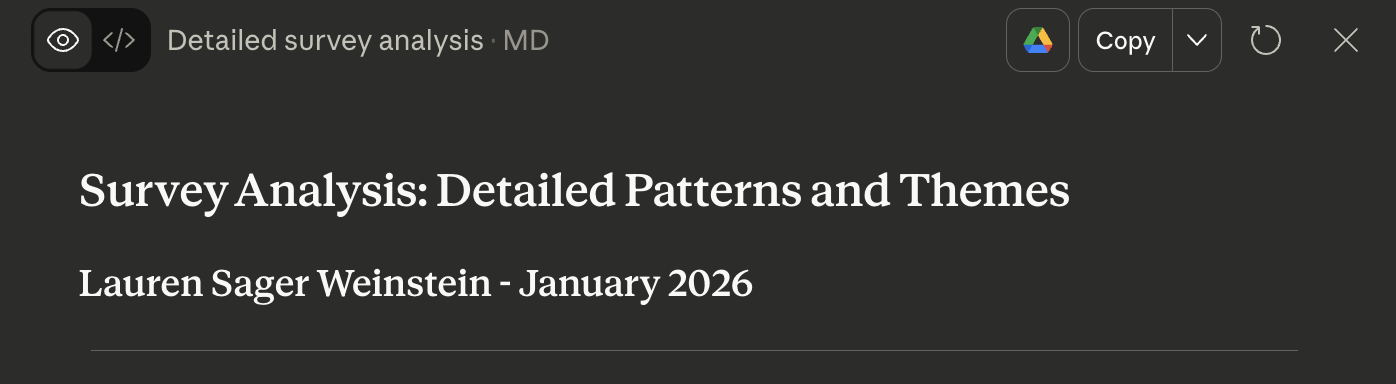

I’ve seen something similar in Claude. When I asked it to analyse text responses in a survey, it produced a report and attributed it to ‘Lauren Sager Weinstein’. I googled and saw that Lauren Sager Weinstein is Chief Data Officer at Transport for London:

I tried asking Claude why, but didn’t get anything convincing: